Riots, Rage, and Resistance: A Brief History of How Antibiotics Arrived on the Farm

Early twentieth-century farmers struggled to keep pace with demand

In 1950, American farmers rejoiced at news from a New York laboratory: A team of scientists had discovered that adding antibiotics to livestock feed accelerated animals’ growth and cost less than conventional feed supplements. The news blew “the lid clear off the realm of animal nutrition,” crowed the editors of one farm magazine. Farmers and scientists alike “gasp[ed] with amazement, almost afraid to believe what they had found.” “Never again,” vowed another writer, would farmers suffer the “severe protein shortages” of the past.

Those glad tidings overshadowed contemporaneous warnings about bacterial resistance, most notably from a series of Japanese studies. Researchers had found that bacteria repeatedly exposed to antibiotics possessed an uncanny ability to thwart the very drugs designed to kill them. In 1966, the editors of the New England Journal of Medicine warned that if humanity continued to ignore the reality of bacterial “resistance,” they would “find themselves back in the preantibiotic Middle Ages.” It wasn’t until the early 1970s that some Americans began lobbying to ban antibiotics from the farm, arguing that feeding animals “sub-therapeutic” doses of antibiotics fostered bacterial resistance in meat-eating humans. Alas, science being what it is, for every critic who found evidence of links between antibiotic use in livestock production and antibiotic resistance in humans, another whipped out evidence to the contrary.

Fast forward to 2013: Scientists are still arguing about the dangers of bacterial resistance and the debate about antibiotics as a feed supplement rages on. What’s missing is the history of why antibiotics arrived on the farm — and why farmers then and now have lobbied to keep them there.

####

Understanding that history requires going back to the first two decades of the twentieth century. American farmers, already a minority, could not keep pace with the needs of the urban, non-food-producing majority. Demand routinely outstripped supplies, and chronically exorbitant food prices confounded policy makers and enraged consumers. After 1914, World War I intensified both global demand for U.S. foodstuffs and shoppers’ outrage. Complaining about food shortfalls on one hand and high prices on the other became a national pastime, one summed up in a phrase Americans of the era coined to capture their grievance: the high cost of living.

Much meat, few customers. Butchers loafed during the 1910 meat protest

Of all the foods whose high price aggravated consumers, none were more infuriating than those at the meat counter. In 1910, millions of Americans joined a national meat boycott to protest prices, and in 1917, just weeks before the U.S. entered World War I, urban protests against the cost of meat resulted in picket lines and shattered butcher shop windows. In the aftermath, Americans encouraged the USDA to fund research aimed at keeping food supplies, and especially meat, on pace with demand.

At first, animal scientists focused on research that would help farmers improve livestock nutrition for single-stomach animals. (Ruminant nutrition posed different problems.) Farmers and scientists alike knew that chickens and hogs were healthier and gained more weight more quickly when their diets contained animal-derived proteins such as fish meal, cod liver oil, or “tankage” (rendering-plant byproducts). Feed those same animals plant-based “vegetable” proteins, on the other hand, and they weighed less at maturity and were more prone to disease, which meant less meat for butchers and higher prices for consumers. But feed manufacturers relied on expensive imports: fish meal from Japan and cod liver oil from Norway. So scientists hunted for alternatives, supplements that would give livestock the same growth boost as animal-derived proteins but at lower cost.

The outbreak of World War II shifted the search from desirable to urgent. Thanks to Pearl Harbor on one hand and the Nazis on the other, supplies of fish meal and cod liver oil vanished. Those losses, along with war-driven scarcities of feedstuffs like corn and soybeans, led to predictable results: Farmers spent more to feed livestock, but their efforts produced relatively scrawny animals, higher prices (and a thriving black market in meat) for civilians, and fewer pounds of protein for troops. Those developments unnerved federal officials and politicians who remembered the meat protests of World War I.

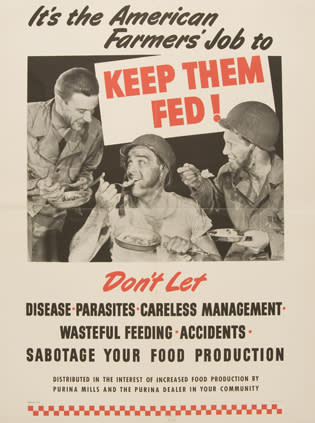

In the 1940s, farming became a patriotic imperative

Prodded by the White House, Pentagon, and farmers, scientists (themselves in short supply as men and women exchanged lab coats for military uniforms) raced to find an animal protein substitute. Some conducted feeding trials using combinations of vitamins, minerals, and amino acids. Others pinned their hopes on sweet potatoes and protein-rich soybeans, of which the U.S. was the world’s second-largest producer. Alas, neither replicated the power of cod liver oil or fish meal.

VE- and VJ-Day came and went with scientists none the wiser, but as the 1940s wound to a close, researchers began closing in on their elusive quarry. In 1948, scientists at pharmaceutical company Merck announced the discovery of a vitamin, B12, which they had isolated in animal liver. The news made headlines worldwide because the vitamin would cure pernicious anemia, at the time a global, and fatal, scourge. But a ton of liver yielded only twenty milligrams of the stuff. Antibiotics to the inadvertent rescue: Pharmaceutical companies manufactured those wonder drugs from vats of fermented microbes, a technique that generated gallons of organism-soaked residues, which, researchers discovered, could be used to synthesize B12 at low cost.

Two years later, scientists at American Cyanamid’s Lederle laboratory forged the chain that linked animal nutrition and antibiotics. The Lederle team was investigating a long-time poultry nutrition mystery: When chickens rooted through bacteria-rich manure (their own or other animals’), they laid more eggs and enjoyed lower mortality rates and less illness. The team turned its microscopes on those henhouse organisms and discovered that one of them produced a substance that resembled B12. Was B12 the mysterious factor that distinguished animal proteins from plant-based counterparts? Team members tested that proposition by feeding animals B12, in this case a batch created with residues from the manufacture of the antibiotic Aureomycin.

They assumed that the B12, like any vitamin, would enhance the animals’ health, but the results astonished them: The animals that ate that Aureomycin-based B12 sample grew fifty percent faster than those fed B12 manufactured from other residues. Initially team members believed that they had discovered yet another vitamin. Further tests revealed the startling truth: They’d inadvertently employed a batch of B12 that contained not just manufacturing residues but tiny amounts of Aureomycin, too.

The two-for-one, marveled a reporter for Science News Letter, “cast the antibiotic in a spectacular new role” for the “survival of the human race in a world of dwindling resources and expanding populations.” Farmers wasted no time abandoning expensive animal proteins in favor of both B12 and infinitesimal, inexpensive doses of antibiotics. Their livestock reached market weight more quickly, and farmers’ production costs dropped. Consumers enjoyed lower prices for pork and poultry.

And in the 1950s, as in the 1910s, farmers struggled to keep pace with urbanites’ needs, a struggle exacerbated by the post-war rise in the cost of both land and labor, as highways and suburban sprawl gobbled farm land and rural residents decamped for jobs in factories and offices. American foodstuffs also became essential components of U.S. foreign policy and a crucial weapon against the scourge of the fifties, communism. Who could blame livestock producers for latching on to growth-accelerating feed additives? From the farmers’ perspective, antibiotics reduced production costs, appeased price-conscious consumers, and helped save the world from the Red menace.

So it was that in the 1950s, many Americans pooh-poohed scientists’ fears that bacterial resistance could erode antibiotics’ value. And why, in 2013, many of us are still debating the need for those drugs on the farm.

Science being what it is, it’s possible that scientists and citizens alike may never agree about the links between antibiotics in feed and bacterial resistance in humans. So perhaps it’s time to focus the antibiotics debate on a different set of questions. Would a ban on antibiotics in livestock feed lead to higher prices for meat? How much price pain are consumers willing to accept? In the wake of a ban, would farmers earn a fair return for their livestock? Until and unless we wrangle with such questions, it’s likely that the debate will continue.

References:

“gasp[ed] with amazement”: “They’ve Doubled Gains With New Drugs,” Successful Farming 48, no. 6 (June 1950): 45.

“Never again”: “Antibiotics Now Proved in Hog and Poultry Ratios, They’re the Biggest Feeding News in 40 Years!,” Successful Farming 49, no. 3 (March 1951): 33.

“find themselves back”: “Infectious Drug Resistance,” New England Journal of Medicine 275, no. 5 (August 4, 1966): 277.

“cast the antibiotic”: “Drug Promotes Growth,” Science News Letter 57, no. 16 (April 22, 1950): 243.

Images: Plowing in the early 20th century: Wikipedia / http://en.wikipedia.org/wiki/File:Agriculture_(Plowing)_CNE-v1-p58-H.jpg ; 1910 meat protest: Library of Congress / http://www.loc.gov/pictures/item/ggb2004004488/ ; Keep Them Fed poster: National Agricultural Library Special Collections / http://specialcollections.nal.usda.gov/imagegallery/poster-collection-image-gallery-62 ; Author photo: Ngaire West-Johnson.


© 2013 ScientificAmerican.com. All rights reserved.