AI can ‘see’ people through walls using WiFi signals

Scientists have used artificial intelligence to analyse WiFi signals and map the positions and poses of people inside a building.

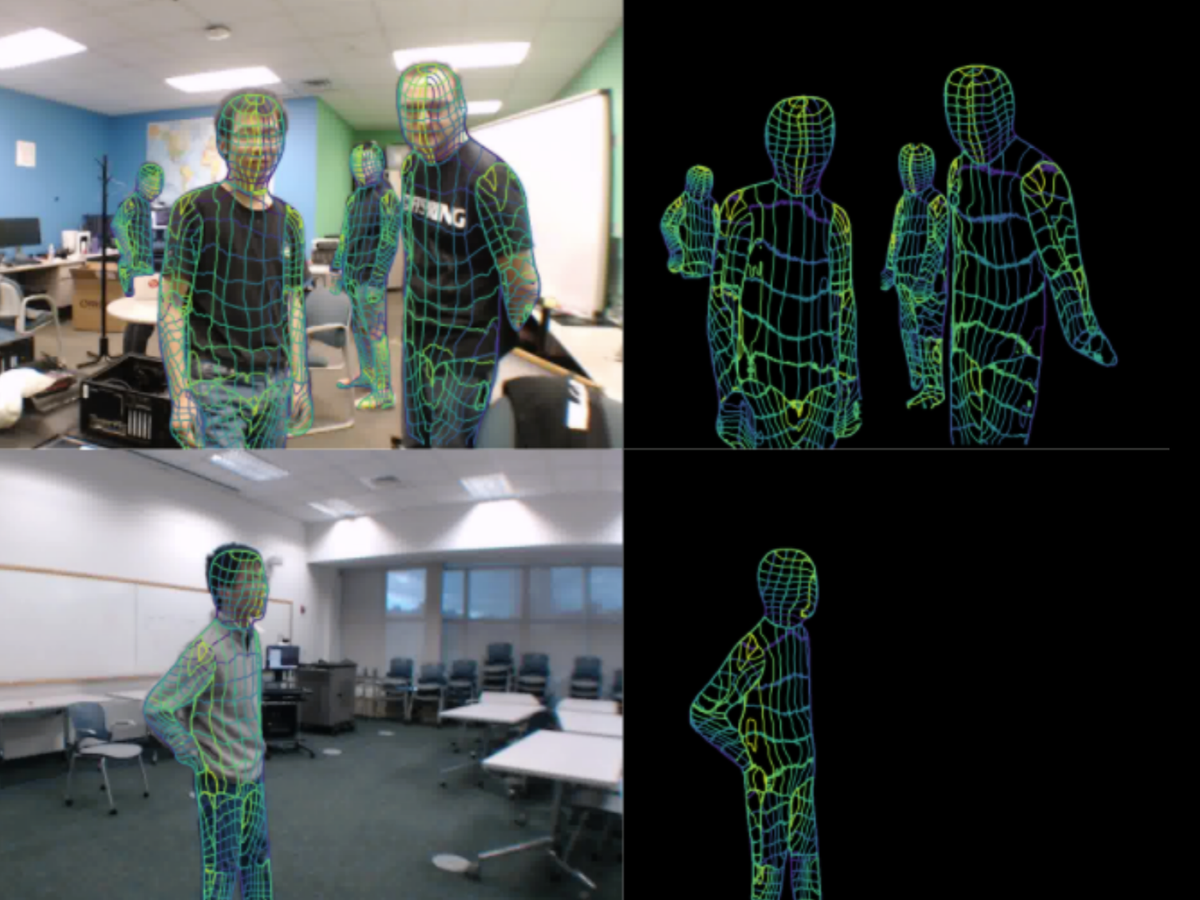

A team at Carnegie Mellon University developed a deep neural network that digitally maps human bodies using the WiFi signals around them.

The researchers said they developed the technology to address the limitations of existing 2D and 3D sensing tools such as cameras, Lidar and radar.

A preprint describing the technology argues that WiFi signals “can serve as a ubiquitous substitute” for these other methods.

“The results of the study reveal that our model can estimate the dense pose of multiple subjects, with comparable performance to image-based approaches, by utilising WiFi signals as the only input,” the researchers wrote in the study.

“This paves the way for low-cost, broadly accessible, and privacy-preserving algorithms for human sensing.”

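The researchers built on DensePose, a system developed by AI researchers at Facebook, now Meta, that maps the pixels of human bodies in images onto a 3D model of the body surface. Their network takes the phase and amplitude of WiFi signals passing between routers and translates them into the same kind of body map.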
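As a rough illustration of what “phase and amplitude” means here: WiFi hardware can report channel state information (CSI) as one complex number per subcarrier, whose magnitude is the amplitude and whose angle is the phase. The minimal Python sketch below assumes that input format. The function name `amplitude_and_phase` and the sample values are hypothetical, and the phase unwrapping step is a standard signal-processing technique, not a confirmed detail of the Carnegie Mellon pipeline.

```python
import cmath
import math


def amplitude_and_phase(csi):
    """Split complex CSI samples (assumed one per subcarrier) into
    amplitudes and unwrapped phases, both as lists of floats."""
    amplitudes = [abs(h) for h in csi]
    # cmath.phase returns an angle in the range (-pi, pi].
    raw_phases = [cmath.phase(h) for h in csi]

    # Unwrap: whenever neighbouring phases jump by more than pi, assume
    # the angle wrapped around and shift later values by 2*pi to undo it.
    unwrapped = []
    offset = 0.0
    for i, p in enumerate(raw_phases):
        if i > 0:
            delta = p - raw_phases[i - 1]
            if delta > math.pi:
                offset -= 2 * math.pi
            elif delta < -math.pi:
                offset += 2 * math.pi
        unwrapped.append(p + offset)
    return amplitudes, unwrapped


if __name__ == "__main__":
    # Hypothetical CSI readings whose true phase rises steadily across subcarriers.
    samples = [cmath.rect(1.0, 3.0 + 0.1 * k) for k in range(4)]
    amps, phases = amplitude_and_phase(samples)
    print([round(a, 3) for a in amps])
    print([round(p, 3) for p in phases])
```

Without unwrapping, the raw phase in this example would jump from about 3.1 to about -3.08 radians between the second and third samples, even though the underlying signal changes smoothly. Removing artificial jumps like this is a common reason raw CSI phase is cleaned before being fed to a model.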

The WiFi-based model is currently limited to 2D representations of people, but the researchers hope to develop it further so that it can predict 3D human shapes.

The team claims the system offers a cheap and “privacy-friendly” alternative to cameras and Lidar, because it does not produce a clear image of the subject.

Potential applications include helping elderly people continue to live independently, the scientists noted.

“We believe that WiFi signals can serve as a ubiquitous substitute for RGB [camera] images for human sensing in certain instances,” they wrote.

“Most households in developed countries already have WiFi at home, and this technology may be scaled to monitor the well-being of elderly people or just identify suspicious behaviours at home.”