Bernie Sanders wants to completely ban cops from using facial recognition tech from firms like Amazon

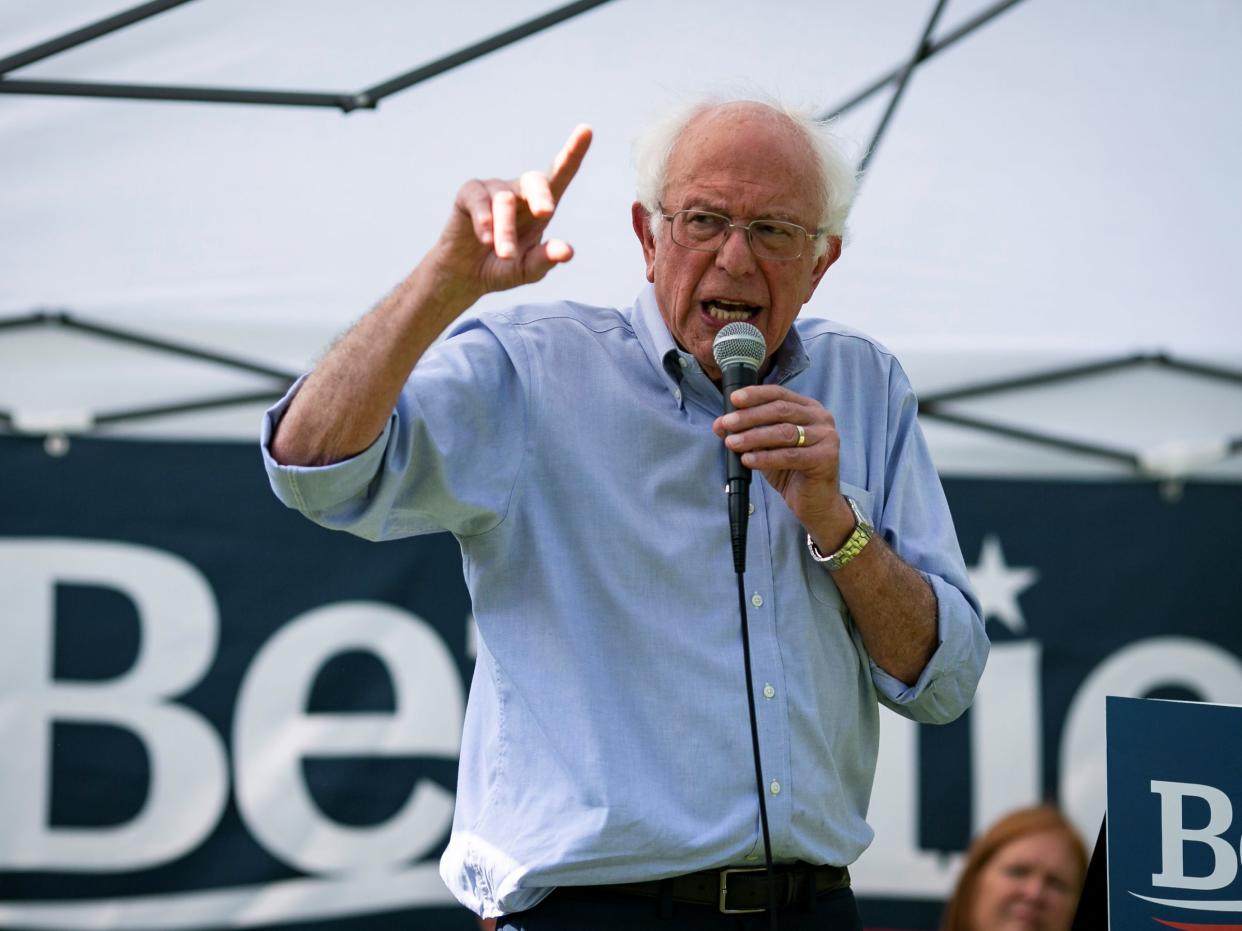

REUTERS/Al Drago

- Democratic presidential candidate Bernie Sanders has made facial recognition part of his campaign platform.
- As part of a sweeping set of promises on criminal justice reform, Sanders says he will ban the use of facial recognition by police.
- Facial recognition tech, including Amazon's Rekognition software, is already being trialled by police forces, and has been the subject of fierce opposition from civil rights groups, AI experts, and Amazon's own employees.

Bernie Sanders has included banning the use of facial recognition by police forces in a new criminal justice reform plan published on Sunday.

The plan doesn't elaborate much on Sanders' reasons for banning the technology, but the Democratic 2020 candidate said he will "ensure law enforcement accountability and robust oversight."

Facial recognition systems such as Amazon's Rekognition software are already starting to be deployed by some US law enforcement agencies. The use of Rekognition has been opposed by civil rights organisations, AI experts, and even Amazon workers.


Rekognition has been criticised by researchers for displaying ingrained racial and gender bias. A pair of researchers published a paper in January concluding that Rekognition showed higher error rates for women and people with darker skin tones.

Amazon Web Services' general manager of artificial intelligence Dr. Matt Wood pushed back against the paper, calling it "misleading" because, among other things, the researchers used an outdated version of the software.

Apart from any inherent flaws in the software, numerous reports have emerged of police forces struggling to use Rekognition properly. A Gizmodo report from January revealed that police in Washington County, Oregon, were not adhering to Amazon's guidelines on using the software's confidence ratings (i.e. the percentage certainty it assigns to a match). Orlando police ditched the software last month due to technical problems and a lack of resources.

Frederike Kaltheuner, head of Privacy International's programme on corporate exploitation, previously told Business Insider that, faulty or not, facial recognition should not be used by police. "When it works it turns people into walking ID cards, when it doesn't it risks incriminating the innocent who then have to prove that they are not guilty," she said.
