Should the government be allowed to use facial recognition?

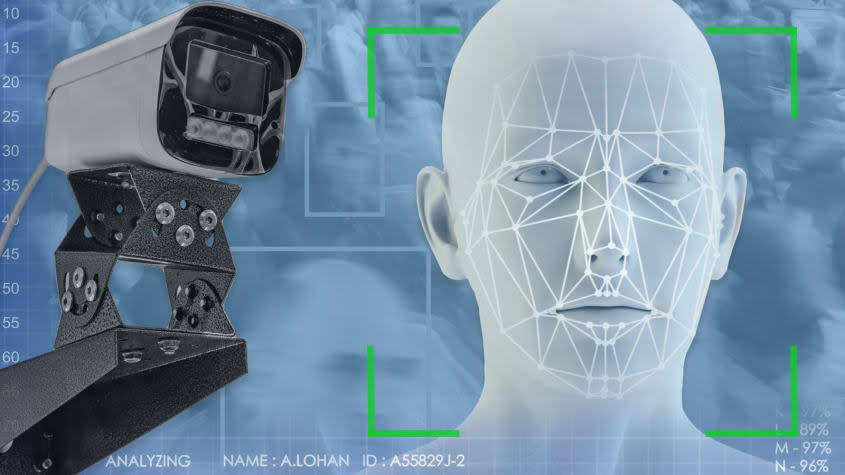

As technology advances, facial-recognition software is being put to numerous new uses, often by government entities, for security purposes. The Transportation Security Administration has been testing the software at more than a dozen U.S. airports, drawing both praise for the system's security features and calls from politicians for the scanners to be removed. Facial-recognition scanners have also been used at concerts and venues like Madison Square Garden, with both positive and negative results.

While security applications of facial-recognition software continue, the technology has reportedly found uses in more nefarious settings. A report in The Washington Post found that dozens of local governments are installing facial-recognition cameras in low-income housing, where they're "subjecting many of the 1.6 million Americans who live there, overwhelmingly people of color, to round-the-clock surveillance." In Scott County, Virginia, the Post reported that facial-recognition cameras "scan everyone who walks past them" looking for people who are banned from public housing. In past years there have also been reports of immigration agents using facial recognition to mine state driver's license databases, and private companies like Amazon have gotten in on the action.

Controversies surrounding facial recognition are not new. However, there's no federal regulation of the technology, so for now, its use by government entities remains legal. But should it be? Is it time for Congress to pass legislation banning government use of facial recognition? Or is the software a sign of evolving technology that should be allowed to integrate into people's lives?

What are commentators saying?

Some in government may not even understand how artificial intelligence, including facial recognition, works, Rep. Jay Obernolte (R-Calif.), who has a master's degree in AI, told The New York Times. "You'd be surprised how much time I spend explaining to my colleagues that the chief dangers of AI will not come from evil robots with red lasers coming out of their eyes," said Obernolte. For those who do understand the technology, the issue "doesn't feel urgent for members," Rep. Don Beyer (D-Va.) told the Times.

As a result, the government's continued use of facial-recognition software "raises serious privacy and equity issues," Olga Akselrod and Jay Stanley wrote for the ACLU. The government also tends to outsource the implementation of facial recognition to private tech companies, which are "exempt from the checks and balances that apply to government, such as public records laws or privacy laws specifically applicable to government agencies," they added. The technology may also be skewed by racial bias, and "similar questions need to be asked about the use of facial-recognition algorithms by the police and other public institutions," Fraser Sampson wrote for TechMonitor. There are valid questions, Sampson noted, about "the accuracy of facial-recognition algorithms in identifying faces in a crowd, especially those belonging to nonwhite individuals."

These implicit biases could also be compounded by the U.S. history of racially motivated surveillance, and "the current misuse of facial-recognition technologies … often reflect existing societal biases and build upon harmful and virtuous cycles," Caitlin Chin and Nicol Turner Lee wrote for the Brookings Institution. Facial-recognition technologies "also enable more precise discrimination, especially as law-enforcement agencies continue to make misinformed, predictive decisions around arrest and detainment that disproportionately impact marginalized populations," they added. Chin and Lee argued that if facial-recognition technology is to remain legal, there need to be "clear restrictions on the use of surveillance technologies in certain contexts, or greater accountability and oversight mechanisms."

What's next?

Some local governments have taken steps to limit or even ban facial recognition. In 2020, the city of New Orleans barred its police department from using the software. Similar laws have been passed elsewhere, and Virginia placed limits on its use by law enforcement.

However, New Orleans later reversed its decision, moving to allow police officers to use facial recognition in certain situations. Virginia and other states made similar reversals following a rise in violent crime. So while problems with facial recognition exist, restricting the technology is, by some lawmakers' own admission, not a priority for Congress, and its use looks primed to continue in the near future.
