A huge fake-nude Telegram operation shows the big threat posed by deepfakes is revenge porn, not disinformation

A huge network on messaging app Telegram has been providing members with deepfaked nude images of women using a bot, deepfake monitoring firm Sensity said in a report Tuesday.

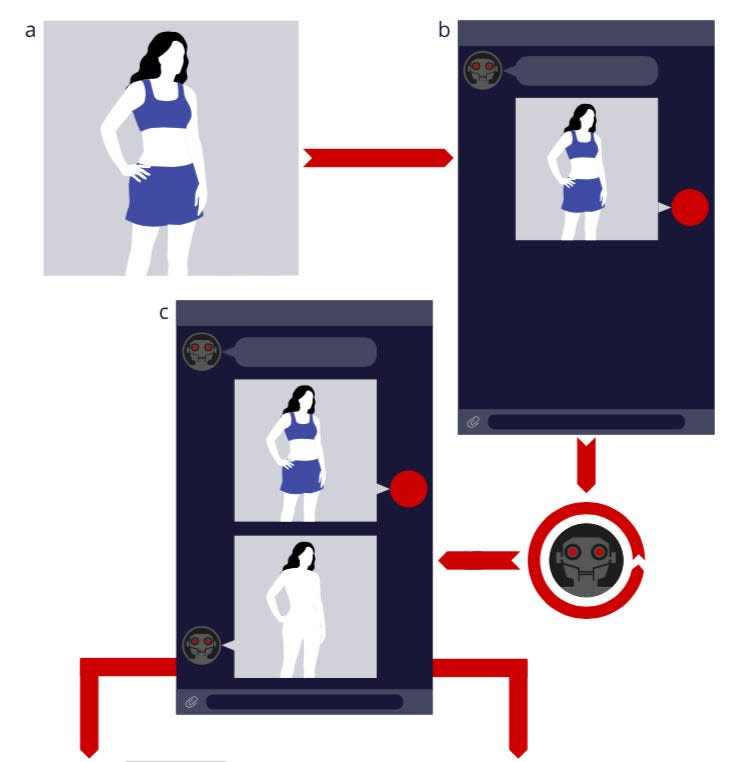

Users sent the bot a picture of a woman they wanted to see naked, and the bot used deepfake technology to generate a fake nude image of that woman.

Sensity's analysis showed a staggering number of women were targeted by the bot — more than 680,000, including some underage girls.

This serves as a grim reminder that deepfake technology is overwhelmingly used to create pornographic content, and can easily be used for fake revenge porn.

A chilling investigation into a bot service that generates fake nudes has highlighted that the most urgent danger internet "deepfakes" pose isn't disinformation — it's revenge porn.

Deepfake-monitoring firm Sensity, previously Deeptrace, on Tuesday revealed it had discovered a huge operation disseminating AI-generated nude images of women and, in some cases, underage girls.

The service was operating primarily on the encrypted messaging app Telegram using an AI-powered bot.

Users would send an image of a woman they wanted to see naked to the bot. The bot would then "strip-naked" the image, generating a fake nude body, and superimpose it onto the original image of the woman in question.

The bot watermarked the image unless the users paid using coins bought with real money.

Sensity's report is a grim reminder that deepfakes are not, as some feared, being weaponized at scale to distort news information — they are overwhelmingly being used to harass, humiliate, and even extort women.

A poll of the bot's users suggested most wanted to see fake nudes of "familiar girls, who I know in real life."

Sensity said that "most" of the original images "appeared to be taken from social media pages or directly from private communication, with the individuals likely unaware that they had been targeted."

Over 680,000 women had their image stolen

The bot used so-called deepfake technology, specifically an open-sourced version of a piece of deepfake software called "DeepNude," which emerged on the web in June last year.

Deepfake technology uses a kind of AI called Generative Adversarial Networks (GANs). GANs can create fake images after being trained on real ones — in this case, you show the GANs thousands upon thousands of images of naked bodies, and they are able to cook up a fairly convincing fake one, which can then be superimposed onto an image.
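For readers who want the formal version, the training process described above is usually written in the research literature as a two-player game. The formula below is the standard textbook GAN objective, not something taken from Sensity's report, and the symbols are the conventional ones: G is the generator that produces fake images from random noise z, D is the discriminator that scores how real an image x looks, and the two are trained against each other.

```latex
\min_{G} \max_{D} \;
  \mathbb{E}_{x \sim p_{\text{data}}} \big[ \log D(x) \big]
  + \mathbb{E}_{z \sim p_{z}} \big[ \log \big( 1 - D(G(z)) \big) \big]
```

In plain terms, the discriminator tries to tell real training images from generated ones, while the generator tries to produce images the discriminator cannot tell apart from real ones.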

The bot's reach was enormous.

Seven Telegram channels with access to the bot had racked up roughly 101,000 members between them by the end of July 2020. A poll of those channels' members found that most (70%) were from Russia and former USSR countries, though there were also members from all over the world, including the US, Europe, and Latin America.

Sensity's initial analysis found at least 104,000 women had their images stolen — their fake nudes generated and shared on Telegram. The day before the report's publication, Sensity found the bot was active on another site, and analysis conducted there suggested the overall number of people who had their images processed was much higher, more than 680,000.

This shows the real danger of deepfakes

As deepfake technology has become more accessible and widespread, it has created fears it could be used to disseminate fake information and even fake news.

Sensity's report is a grim reminder that deepfakes are overwhelmingly being used to harass, humiliate, and even extort women.

Sensity reported last year that 96% of deepfake videos online were non-consensual pornography. Sensity CEO Giorgio Patrini told Business Insider that proportion has not changed.

"While in absolute numbers deepfakes are growing exponentially, the fraction of adult material is again about the same after one year," said Patrini.

The remaining 4% isn't made up primarily of videos that distort news information. "Weaponization of deepfakes for disinformation purposes does exist but it is very rare and it hardly makes the statistics," Patrini said.

He added that some of that remaining 4% is made up of humorous or parodic deepfake videos. One example is a video that superimposed Elon Musk's and Jeff Bezos' faces onto an episode of "Star Trek."

"Statistically, only a small minority of deepfakes are used for satire or entertainment on social media [...] This [report] is a confirmation of a trend where we see most of the deepfake as constituting a reputation threat for individuals," Patrini said.

Why is deepfake porn so prevalent?

One big reason that pornography has flooded deepfake tech is that it is easily monetizable. In the case of the Telegram bot, the service was free to use, but unless users paid using "premium coins," 12 of which cost about $1.50, the images would be watermarked.

"There is a financial incentive to produce more deepfakes: Creators can make money by selling directly the content or their skill on marketplaces or, in more sophisticated cases, there are whole deepfake communities where the creator can earn money via advertisement or subscriptions," Patrini told Business Insider. "Ultimately, the fact deepfake creation can be a business is what drives the growth."

Another major motivating factor is revenge porn, the act of sharing intimate images of someone online without their consent.

A user poll posted in the "central hub" Telegram channel unveiled by Sensity suggested many users might use the tool for exactly this reason. The poll asked users which women they were interested in generating fake nudes of, and 63% of respondents answered: "familiar girls, who I know in real life."

The next most popular answer, with 16%, was "stars, celebrities, actresses, singers," followed by "models and beauties from Instagram" with 8%.

Sensity's own analysis roughly tallied with these results. "Most of the original images appeared to be taken from social media pages or directly from private communication, with the individuals likely unaware that they had been targeted," its report said. It also found a "limited number" of images were targeting underage girls.

While a deepfake video intended to spread disinformation has to be convincing enough for people to believe it's genuine, deepfaked revenge porn doesn't necessarily have to look genuine to achieve its intended effect.

Even a crudely stitched-together image can be used to humiliate and objectify the person whose image has been stolen.

"Bot-generated images can also be explicitly weaponized for the purposes of public shaming or extortion-based attacks," Sensity said in its report, although it couldn't say to what extent images generated on the Telegram channels made their way into the real world.

Real or fake, revenge porn can be devastating

Dr Ksenia Bakina, legal officer at digital rights nonprofit Privacy International, told Business Insider the driving force behind non-consensually generated deepfaked sexual images is societal, not technological.

"The technology facilitates its spread but it is the sexist, abusive, controlling and misogynistic attitudes that ultimately fuel this type of abuse," said Bakina.

Bakina added that deepfaked images can fall into legal gray areas, meaning they are able to evade laws around revenge porn because the images aren't considered "genuine" — but she stressed that they can have the same, devastating effect on victims.

"Women who are victims of deepfakes experience both physical and psychological harms from the abuse and harassment that follows. Many women are identified online, receive malicious messages and violent rape and death threats," she said.

With bots like the one uncovered by Sensity offering cheap, fast, accessible deepfaked nude images, the disinformation potential of deepfakes seems dwarfed by the real and present danger posed by deepfake porn.

Read the original article on Business Insider